Between ethics and the law, who should regulate artificial intelligence systems?

We have all begun to realize that the rapid development of AI is truly going to change the world we live in. AI is no longer just a branch of computer science; it has broken out of research labs with the development of “AI systems”—“software that, for human-defined objectives, generates content, predictions, recommendations, or decisions that influence the environments with which they interact” (European Union definition).

Bernard Fallery, University of Montpellier

AI applications are everywhere, and they introduce new risks, including the manipulation of behavior. How can we regulate their use?

Pixabay, CC BY

The challenges of governing these AI systems, with all the nuances of ethics, control, regulation, and governance, have become crucial, as their development is now in the hands of a few digital empires such as GAFA-NATU-BATX… which have become the masters of fundamental societal choices regarding automation and the “rationalization” of the world.

The complex interplay of AI, ethics, and law thus takes shape within the power dynamics, and the collusion, between governments and tech giants. But citizen engagement is essential to champion alternatives to a technological determinism where “everything that can be connected will be connected and streamlined.”

AN ETHICS OF AI? KEY PRINCIPLES AT AN IMPASSE

Admittedly, three major ethical principles help us understand how a genuine bioethics has developed since Hippocrates: the personal virtue of “critical prudence,” the rationality of rules that must be capable of being universal, and the assessment of the consequences of our actions in relation to the common good.

For AI systems, these guiding principles have also served as the foundation for hundreds of ethics committees and charters: the Holberton-Turing Pledge, the Montreal Declaration, the Toronto Declaration, the UNESCO Program… and even Facebook! Yet AI ethics charters have yet to lead to any enforcement mechanism, or even the slightest reprimand.

On the one hand, the race for digital innovation is essential for capitalism to overcome the contradictions in profit accumulation, and it is essential for states to develop algorithmic governance and unprecedented social control.

But on the other hand, AI systems are always both a cure and a poison (a pharmakon in Bernard Stiegler’s sense) and thus continually create different ethical situations that do not fall under principles but require “complex thinking,” a dialogic approach in Edgar Morin’s sense, as demonstrated by the analysis of ethical conflicts surrounding the Health Data Hub.

A LAW OF AI? A CONCEPT STRADDLING REGULATION AND LEGISLATION

Even if broad ethical principles will never be fully implemented, it is through critical discussion of these principles that a body of AI law may emerge. The law faces particular challenges in this area, including the scientific uncertainty surrounding the definition of AI, the extraterritorial nature of the digital realm, and the rapid pace at which platforms develop new services.

In the development of AI law, two parallel trends can be observed. On the one hand, there is regulation through simple guidelines or recommendations, aimed at the gradual legal integration of standards (from technology to law, such as cybersecurity certification). On the other hand, there is genuine regulation through binding legislation (from positive law to technology, such as the GDPR on personal data).

POWER DYNAMICS… AND COLLUSION

Personal data is often described as a highly coveted new “black gold,” as AI systems rely heavily on massive amounts of data to fuel statistical learning.

In 2018, the GDPR became a comprehensive European regulation governing such data, capitalizing on two major scandals: the NSA’s PRISM surveillance program and the Facebook data breach involving Cambridge Analytica. The GDPR even enabled activist lawyer Max Schrems in 2020 to have all transfers of personal data to the United States invalidated by the Court of Justice of the European Union. But instances of collusion between governments and tech giants remain numerous: Joe Biden and Ursula von der Leyen are constantly working to reorganize these data transfers, which are contested by new regulations.

Today, the GAFA-NATU-BATX monopolies are shaping the development of AI systems: they control possible futures through “predictive machines” and the manipulation of attention; they enforce the interdependence of their services and will soon push for the integration of their systems into the Internet of Things. Governments are responding to this concentration of power.

In the United States, a lawsuit seeking to force Facebook to sell Instagram and WhatsApp is set to begin in 2023, and an amendment to antitrust legislation is expected to be passed.

In Europe, starting in 2024, the Digital Markets Act (DMA) will regulate acquisitions and prohibit “major gatekeepers” from self-preferencing or bundling their various services. As for the Digital Services Act (DSA), it will require “major platforms” to be transparent about their algorithms and to promptly address illegal content, and it will prohibit targeted advertising based on sensitive characteristics.

But collusion remains strong, as everyone also protects “their” tech giants by invoking the Chinese threat. Thus, under pressure from the Trump administration, the French government suspended payment of its “GAFA tax,” even though it had been passed by parliament in 2019, and tax negotiations continue within the framework of the OECD.

NEW AND INNOVATIVE EUROPEAN REGULATIONS ON THE SPECIFIC RISKS OF AI SYSTEMS

Spectacular advances in pattern recognition (whether in images, text, speech, or location data) are giving rise to predictive systems that pose growing risks to health, safety, and fundamental rights: manipulation, discrimination, social control, autonomous weapons… Following China’s regulation on the transparency of recommendation algorithms in March 2022, the adoption of the AI Act (AIA), the European regulation on artificial intelligence, will mark a new milestone in 2023.

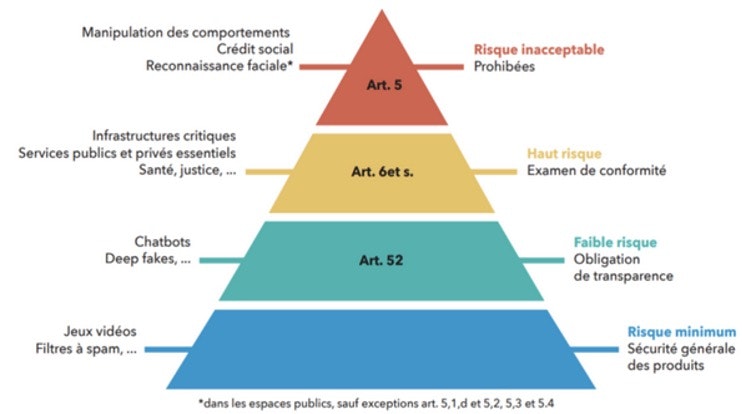

Yves Meneceur, 2021, Provided by the author

This groundbreaking legislation is based on the risk level of AI systems, using a tiered approach similar to that for nuclear risks: unacceptable, high risk, low risk, and minimal risk. Each risk level is associated with prohibitions, obligations, or requirements, which are specified in the annexes and are still being negotiated between the Parliament and the Commission. Compliance and sanctions will be monitored by the competent national authorities and the European Committee on Artificial Intelligence.

CITIZENS UNITE FOR AI RIGHTS

To those who view citizen engagement in the development of AI law as a utopian ideal, we might first point to the strategy of an organization like Amnesty International: advancing international law (treaties, conventions, regulations, human rights courts) and then applying it to concrete situations such as the Pegasus spyware case or the ban on autonomous weapons.

Another successful example is the None of Your Business movement (noyb): advancing European law (GDPR, Court of Justice of the European Union, etc.) by filing hundreds of complaints each year against privacy-violating practices by digital companies.

All these citizen groups, which are working to establish and exercise AI-related rights, take a wide variety of forms and approaches: from European consumer associations filing joint complaints against Google’s account management, to 5G antenna saboteurs who reject the total digitization of the world, to Toronto residents thwarting Google’s major smart city project, and to activist doctors advocating for free software who want to protect health data…

This emphasis on various ethical imperatives, which are both conflicting and complementary, aligns well with the complex conception of ethics proposed by Edgar Morin, which accepts resistance and disruption as inherent to change.

Bernard Fallery, Professor Emeritus of Information Systems, University of Montpellier

This article is republished from The Conversation under a Creative Commons license. Read the original article.